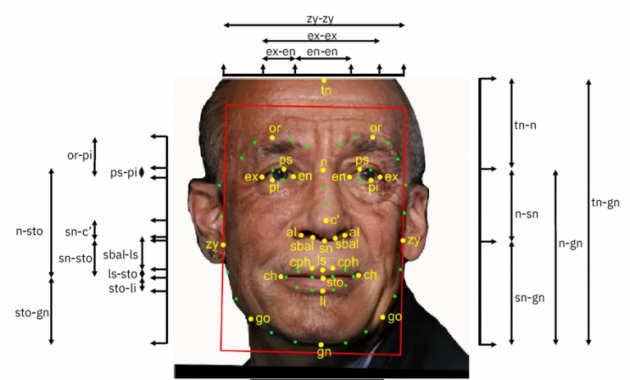

Facing a pair of related lawsuits, Amazon and Microsoft both acknowledge that people working on their respective facial-recognition technologies downloaded IBM’s “Diversity in Faces” photo database — compiled from 1 million photos uploaded by Flickr users — to see if the images improved their AI algorithms.

The answer, both companies insist, was no.

Researchers and others at Amazon and Microsoft determined independently in 2019 that the database wouldn’t improve the accuracy of their facial recognition technologies, and decided not to use it, lawyers for the companies assert in separate filings in federal court in Seattle.

But the plaintiffs, two Chicago-area men who uploaded thousands of photos to their Flickr accounts under Creative Commons licenses, say the evidence shows Microsoft and Amazon wrongly used their images without written notice and consent, violating their rights under the Illinois Biometric Information Privacy Act.

And it wasn’t just them, they allege: the lawsuits seek class-action status, proposing to represent thousands of people whose pictures were included in the database without their knowledge.

Larger implications: The suits illustrate a series of broader issues facing the tech industry and society, including the lack of a national privacy law in the United States; the tradeoffs inherent to developing accurate artificial intelligence models; and the blurring of jurisdictional lines due to the rise of cloud computing.

Even if they had used the data, Amazon and Microsoft claim, they aren’t subject to Illinois privacy law, because the evidence hasn’t established that the data was downloaded and stored on any servers in the state.

The plaintiffs counter that the law applies anyway, because they live in the state.

Although the photos were uploaded under Creative Commons licenses, lawyers for the plaintiffs say they used versions of the license that prohibited commercial use and required attribution to the source of the images.

New allegations: Both lawsuits were filed in July 2020 in U.S. District Court in Seattle, but recent filings add new claims, based in part on depositions and documents turned over by the companies in the process of discovery.

Lawyers for the plaintiffs, Steven Vance and Tim Janecyk of Tinley Park, Ill., alleged in a pair of filings last week that people working for Amazon and Microsoft did more with the database than either company initially let on.

Amazon Web Services research scientists “made extensive use of the biometric data” derived from the photos, the plaintiffs say, asserting that the company’s arguments “rest on a skewed version of the factual record.”

Microsoft’s use of the database was “substantial,” they allege, “including but not limited to using metadata contained in the dataset, downloading all of the 1 million photographs linked to therein, running its facial recognition technology on a subset of those faces, and potentially using it [to] evaluate another facial recognition system it was looking to acquire.”

But in an ironic twist, Microsoft and Amazon have persuaded the court to give them their own degree of privacy. Public versions of the latest court filings redact sentences that would otherwise reveal information gleaned by the plaintiffs in the process of discovery, ostensibly to prevent corporate secrets from becoming public.

Privacy vs. accuracy: The suits against Amazon and Microsoft, and related actions against IBM in Illinois and Google in California, demonstrate the tradeoffs between privacy and accuracy in developing data-hungry AI models.

IBM originally released the Diversity in Faces database in January 2019 with the stated goal of making artificial intelligence more fair and equitable across genders and skin colors.

“We believe by extracting and releasing these facial coding scheme annotations on a large dataset of 1 million images of faces, we will accelerate the study of diversity and coverage of data for AI facial recognition systems to ensure more fair and accurate AI systems,” the company said at the time.

The larger challenges of facial recognition have since caused companies across the industry to pull back.

- After revelations of bias and inaccuracy, IBM dropped its general-purpose facial-recognition technology in 2020.

- Both Amazon and Microsoft subsequently suspended the sale of their facial recognition tools to law enforcement.

- In a recent announcement, Microsoft went further, promising to put new limits and controls on the use of facial recognition as part of a broader set of AI principles.

IBM told The Verge that the database included only publicly available images, was accessible only to verified researchers, and allowed individuals to opt out of the data set.

According to court filings, inconsistencies in the photos were one reason the database wasn’t useful to Microsoft and Amazon. Subjects were looking in different directions and were photographed at varying distances and angles.

Lack of a national privacy law: The lawsuits test the reach of one state’s privacy law, illustrating the complications created by the smorgasbord of state laws that have emerged in the absence of a national privacy law.

The Information Transparency and Personal Data Control Act, a nationwide privacy bill introduced last year by U.S. Rep. Suzan DelBene, D-Wash., sought to avoid what DelBene called a “patchwork” of state privacy laws.

However, the bill stopped short of addressing the hot-button issue of facial recognition. DelBene, a former Microsoft executive, said at the time that she hoped the legislation might have a better chance of passing as a basic privacy law, saving more complex issues for the future. The legislation stalled anyway.

Microsoft and Amazon are among the companies that have called for national privacy legislation to give them a consistent set of rules across the country.

Legal jurisdiction in the cloud era: In court filings, Microsoft says a contractor and an intern for the company downloaded the IBM “DiF” dataset from locations in Washington state and New York, “quickly determined it was useless for Microsoft’s research purposes, and thus did not use it—for anything.”

The company acknowledges that, hypothetically, an encrypted piece of the database could have been stored in a data center in Chicago if it were uploaded to OneDrive by the contractor or intern. However, the company says it “has no reason to believe the DiF Dataset was ever saved to OneDrive.”

Even if a piece of the database was stored in Illinois, the company asserts that the relevant provision of the state privacy law “regulates only acquisition of data through conduct primarily and substantially in Illinois, not encrypted storage of fragmented data after acquisition,” failing to create legal jurisdiction in its view.

Amazon’s defense on this issue is more straightforward: it says the database was downloaded by researchers in Washington state and Georgia, and uploaded to the Amazon Web Services US West Region in Oregon.

Lawyers for the plaintiffs disagree with both companies on this issue, citing a 9th Circuit Court of Appeals ruling in a separate case against Facebook that found it “reasonable to infer” that the state legislature meant for the law to apply to Illinois residents “even if some relevant activities occur outside the state.”

The fate of the lawsuits could hinge on the court’s outlook on the issue of jurisdiction. Separate motions for summary judgment by Microsoft and Amazon are pending before U.S. District Judge James Robart in Seattle.

Court filings: The cases are Vance v. Amazon.com Inc (2:20-cv-01084), and Vance v. Microsoft Corporation (2:20-cv-01082). Here are the latest motions and responses from Microsoft, Amazon, and the plaintiffs:

- Microsoft motion for summary judgment, May 2022

- Plaintiffs’ opposition to Microsoft motion, July 2022

- Amazon motion for summary judgment, May 2022

- Plaintiffs’ opposition to Amazon motion, July 2022